The invention of calculus stands as one of the most transformative moments in human thought, not because it introduced new numbers, but because it taught humanity how to measure change itself. Before calculus, mathematics was largely static. Geometry described shapes frozen in space, arithmetic counted objects at rest, and algebra manipulated symbols that represented fixed quantities. Yet the real world was anything but still. Planets moved, objects fell, populations grew, and heat flowed. The great challenge was not describing things as they were, but as they became.

This problem haunted scholars for centuries. Ancient Greek thinkers such as Zeno had already grappled with paradoxes of motion and infinity, questioning how something could move through infinitely many points in finite time. Medieval and early modern mathematicians refined techniques for approximating areas and slopes, but these methods were fragmented, each tailored to particular curves or problems. What was missing was a unified mathematical language capable of handling continuous change without collapsing under the weight of infinity.

That breakthrough came in the late seventeenth century through the independent work of two remarkable minds: Isaac Newton and Gottfried Wilhelm Leibniz. Although they approached the problem differently, both arrived at the same revolutionary idea: change could be broken down into infinitely small parts and then recombined into meaningful results. In doing so, they turned infinity from a philosophical nightmare into a practical tool.

Newton’s version of calculus grew out of physics. He was preoccupied with motion, velocity, and acceleration, especially as he tried to explain the movement of planets and falling objects. To him, quantities were constantly “flowing,” and calculus, which he called the method of fluxions, was a way to track their rates of change over time. These ideas underpinned his formulation of classical mechanics, most famously presented in Philosophiæ Naturalis Principia Mathematica. Yet although Newton relied on calculus in his own work, the Principia presents its arguments largely in geometric form, in part, it is often suggested, to sidestep criticism surrounding infinitely small quantities.

Leibniz, on the other hand, focused on symbolism and clarity. He introduced the notation still used today, such as the elongated “S” for integrals and the “d” to represent infinitesimal differences. His insight was that complex curves could be understood as collections of infinitely small straight segments, and areas could be computed by summing infinitely thin slices. This elegant notation made calculus easier to learn, share, and expand, ensuring its rapid spread across Europe.

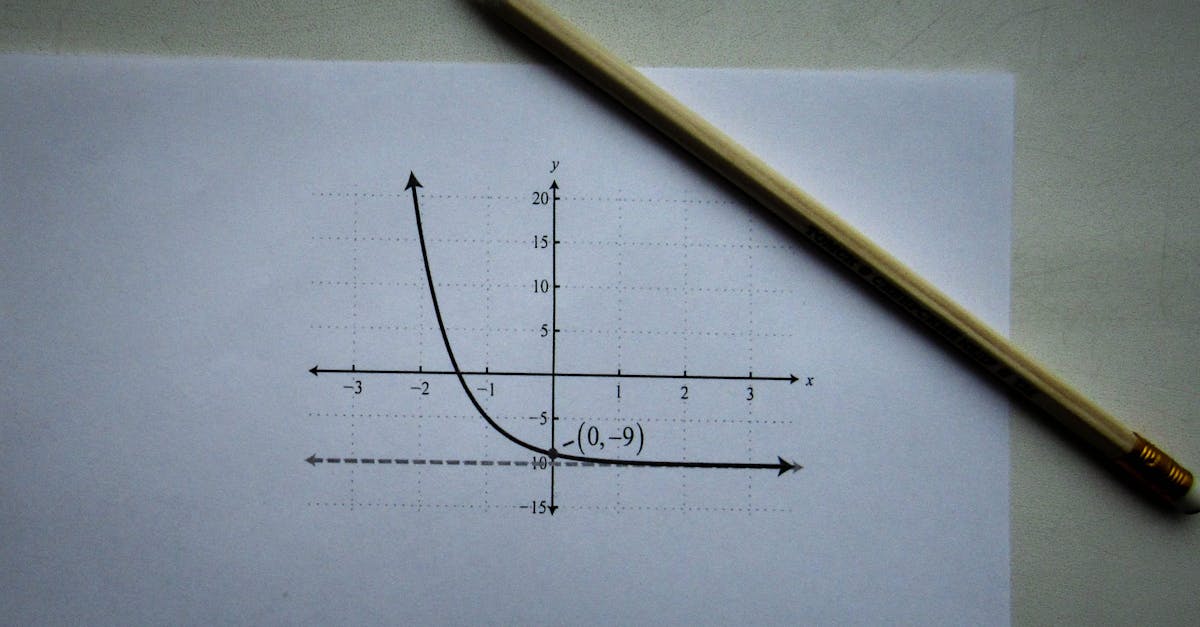

At its heart, calculus rests on two intertwined ideas: differentiation and integration. Differentiation answers the question of how fast something is changing at a precise instant, whether it is the speed of a car, the growth of a population, or the slope of a curve at a single point. Integration reverses the process, allowing mathematicians to accumulate infinitely small contributions into totals, such as distance traveled, area under a curve, or total energy used. The astonishing realization that these two processes are deeply connected is known as the fundamental theorem of calculus, a result that unified motion and accumulation into one coherent framework.
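The link between the two ideas can be checked numerically. The following is a minimal sketch, not a rigorous treatment: it assumes a hypothetical falling object whose position is 4.9·t² metres, estimates its speed with a small finite difference, and then adds up thin slices of that speed to recover the distance fallen. The function names (`derivative`, `integral`, `position`, `velocity`) and the step sizes are illustrative choices, not anything drawn from Newton or Leibniz.

```python
def derivative(f, x, h=1e-5):
    """Central-difference estimate of how fast f is changing at x."""
    return (f(x + h) - f(x - h)) / (2 * h)


def integral(f, a, b, n=10_000):
    """Midpoint Riemann sum: add up n thin slices of width dx under f on [a, b]."""
    dx = (b - a) / n
    return sum(f(a + (i + 0.5) * dx) for i in range(n)) * dx


def position(t):
    """Hypothetical position in metres of a falling object after t seconds."""
    return 4.9 * t ** 2


def velocity(t):
    """Differentiation: instantaneous speed, estimated from position."""
    return derivative(position, t)


if __name__ == "__main__":
    # Speed at t = 2 s; the exact derivative 9.8 * t gives 19.6 m/s.
    print(velocity(2.0))

    # Integration: accumulating speed over [0, 3] should recover the
    # change in position, which is the fundamental theorem in miniature.
    print(integral(velocity, 0.0, 3.0))
    print(position(3.0) - position(0.0))
```

The last two printed values should agree up to small floating-point error: summing the rate of change over an interval gives back the total change, which is what the fundamental theorem asserts in the limit of infinitely thin slices.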

One easily forgotten aspect of calculus is how controversial it initially was. The notion of infinitesimals, quantities smaller than any measurable amount yet not exactly zero, troubled philosophers and mathematicians alike. Critics questioned whether calculus rested on logical foundations or merely on clever tricks that happened to give correct answers. It would take well over a century, until the rigorous development of limits in the nineteenth century, to place calculus on a solid formal footing.

Yet despite these early doubts, calculus reshaped science, engineering, economics, and technology. It allowed humanity to predict eclipses with far greater precision, design bridges, model epidemics, and eventually build computers and spacecraft. By making motion, change, and infinity measurable, calculus gave us a way to understand a dynamic universe with mathematical precision. More than a collection of techniques, it represents a profound shift in how humans describe reality itself.